On Friday, February 27, 2026, something happened that historians may one day mark as a turning point in the story of artificial intelligence, democracy, and warfare. An event as consequential in its own way as the morning Robert Oppenheimer watched the first atomic bomb light up the New Mexico sky and reportedly whispered, “Now I am become Death, the destroyer of worlds.” America has arrived at its Oppenheimer moment with AI. And the company at the center of it all is Anthropic, the San Francisco-based AI lab that built Claude and that just refused a direct order from the President of the United States.

What began as a contract dispute between a technology company and the Pentagon spiraled, within the span of a single extraordinary week, into a full-scale constitutional, ethical, and geopolitical reckoning over who gets to decide how artificial intelligence is used on the battlefield, in surveillance of American citizens, and in the machinery of war itself. By Friday afternoon, President Donald Trump had ordered every federal agency in the United States government to immediately cease using Anthropic’s technology. Defense Secretary Pete Hegseth had declared Anthropic a “Supply-Chain Risk to National Security,” a designation typically reserved for hostile foreign powers. And Anthropic’s CEO Dario Amodei had looked the most powerful government on earth in the eye and said, simply: no.

This is the full story of how it all happened.

The Contract That Started Everything

To understand how America arrived at this moment, you have to go back to last summer, when Anthropic struck a contract worth up to $200 million with the Pentagon to, in the official language of the agreement, “advance responsible AI in defense operations.” It was a landmark deal. Claude became the first AI model to be cleared for use on the military’s classified networks, a staggering achievement that reflected both the sophistication of Anthropic’s technology and the extraordinary trust placed in the company. The model was used, in coordination with data analytics giant Palantir, to provide AI services on the most sensitive defense and intelligence networks in the world.

The Pentagon, which uses Anthropic’s Claude AI system on its classified networks, wanted to be able to use it for “all lawful purposes.” But Anthropic had two redlines for the Pentagon: that Claude would not be used in autonomous weapons, and that it would not be used in the mass surveillance of U.S. citizens.

Those two redlines (no autonomous lethal weapons and no mass domestic surveillance) were baked into Anthropic’s acceptable use policy, and thus into the contract itself. For months, this arrangement worked. Defense officials praised Claude’s capabilities in conversations, with one admitting it would be a “huge pain in the ass” to disentangle.

Claude was deeply embedded in the military’s most sensitive operations. It was used in the operation to capture Nicolás Maduro and could conceivably be used in a potential military operation in Iran.

But something changed. The Pentagon decided it no longer wanted to accept those conditions. And so began one of the most extraordinary confrontations in the history of American technology.

The Week That Changed Everything

The collision between Anthropic and the Pentagon came to a head this week in a remarkable sequence of escalations, ultimatums, and defiance that unfolded like a geopolitical thriller.

The standoff escalated on Tuesday at a high-stakes meeting at the Pentagon between Hegseth and Anthropic CEO Dario Amodei. While a source familiar with the matter said the meeting was cordial, Trump’s comments on Friday suggest the situation changed dramatically.

The Pentagon’s position, as articulated by its chief spokesperson Sean Parnell, was framed as a matter of simple practicality: the Department of War must have full, unrestricted access to Anthropic’s models for every lawful purpose. Officials insisted that the department had no intention of actually conducting mass surveillance or removing humans from weapons targeting decisions. But they also insisted that they would not accept being bound by a private company’s terms of service in defining what “lawful” meant in practice.

Then came Wednesday night. The Trump administration delivered its final offer to Anthropic, requesting that the company allow the department to access its AI model, Claude, for “all lawful purposes.” [The Hill](https://thehill.com/policy/technology/5759399-trump-bans-anthropic-tech/) The Pentagon also raised the stakes dramatically by threatening to invoke the Korean War-era Defense Production Act, a measure that could compel Anthropic to hand over an unrestricted version of Claude on national security grounds. At the same time, officials warned they would label Anthropic a “supply chain risk,” potentially blacklisting it from lucrative government contracts. The message was clear: either comply, or face consequences so severe they could threaten Anthropic’s very existence as a business.

On Thursday, Dario Amodei answered. And his answer was no.

“I believe deeply in the existential importance of using AI to defend the United States and other democracies,” Amodei wrote in a statement Thursday night, but “using these systems for mass domestic surveillance is incompatible with democratic values.” He made clear that the threats (the Defense Production Act, the supply chain risk designation, the loss of the contract) did not change his position. “These threats do not change our position: we cannot in good conscience accede to their request,” Amodei said.

Emil Michael, the Pentagon’s undersecretary for research and engineering, shot back in a post on X on Thursday, accusing Amodei of lying and having a “God-complex.” “He wants nothing more than to try to personally control the US Military and is ok putting our nation’s safety at risk,” Michael wrote. The war of words was now out in the open and fully public. A Pentagon official and an AI CEO were trading accusations on social media about who was putting American lives at risk.

The Department of Defense set a final deadline of 5:01 p.m. ET on Friday for Anthropic to drop its restrictions on the use of its AI model for domestic mass surveillance or entirely autonomous weapons.

The President Steps In

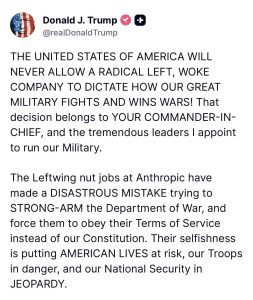

Shortly before that deadline, President Trump entered the fray with one of the most extraordinary social media posts of his presidency. Posted on Truth Social and immediately reverberating across every media platform in the country, the statement was Trump at his most combative, a full-throated assault on Anthropic, its leadership, and what he characterized as its ideology.

“THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!” Trump wrote. “That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.”

He went further, declaring: “The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.”

And then the hammer fell. “Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels.”

Trump did not stop there. He threatened that if Anthropic was not “helpful during this phase out period,” he would use “the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.”

Minutes later, Defense Secretary Pete Hegseth followed with his own statement on X, designating Anthropic a Supply-Chain Risk to National Security. The implications of that designation were staggering in their scope. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. This was not merely revoking a contract. This was an attempt to build a wall between Anthropic and virtually the entire ecosystem of large American enterprise, because a significant portion of major U.S. corporations have some relationship with the Pentagon.

What “Supply-Chain Risk to National Security” Really Means

The designation that Hegseth slapped on Anthropic is worth pausing on, because it is not a trivial or routine bureaucratic label. A “supply-chain risk” designation is usually reserved for companies thought to be extensions of foreign adversaries. Think about that for a moment. A label typically reserved for Chinese tech companies suspected of spying for Beijing has now been applied to an American AI company, founded by Americans, staffed largely by Americans, whose stated mission is the responsible development of AI for the long-term benefit of humanity.

The practical consequences are sweeping. The supply chain risk designation means that any company that works with the U.S. military would have to prove they don’t use Claude in their work with the Pentagon. Much of Anthropic’s success stems from its enterprise contracts with big companies, many of which may have contracts with the Pentagon. “It means that Anthropic’s existing customer base, some large portion of it might evaporate, either because they have government contracts or might want them.”

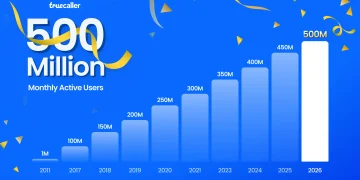

At a moment when Anthropic, valued at around $380 billion, is preparing for what would be one of the most significant IPOs in the history of the technology industry, this designation could not have come at a more fraught time. The $200 million Pentagon contract itself is a relatively small piece of the company’s $14 billion in revenue. But the ripple effects of being blacklisted from the government contractor ecosystem could be vast and lasting.

The Silicon Valley Solidarity That Changed the Story

What might have been a story about one company’s lonely stand against a government became something much larger on Thursday night, when a remarkable wave of solidarity swept through the technology industry.

OpenAI CEO Sam Altman told his employees in a memo on Thursday that the company would push for the same limitations on autonomous weapons and mass surveillance that Anthropic has, according to Axios. This was a significant move. Sam Altman and Dario Amodei are, in many respects, rivals. Their companies compete intensely. But Altman chose this moment to align himself with Anthropic’s position. “For all the differences I have with Anthropic, I mostly trust them as a company, and I think they really do care about safety, and I’ve been happy that they’ve been supporting our warfighters,” Altman said. “I’m not sure where this is going to go.”

Also on Thursday, more than 100 workers at Google sent a letter to Jeff Dean, the company’s chief scientist, asking for similar limits on how the company’s Gemini AI models are used by the U.S. military, according to the New York Times. Tech workers, historically reluctant to take formal political stances, were now organizing around the question of autonomous weapons and AI surveillance in a way not seen since the Project Maven protests of 2018, when Google employees forced their company to abandon a Pentagon drone targeting contract.

Hundreds of employees from Google and OpenAI signed a petition in the past 24 hours calling on their companies to mirror Anthropic’s position.

The Oppenheimer Parallel

The physicist J. Robert Oppenheimer led the Manhattan Project and helped create the atomic bomb. After its use on Hiroshima and Nagasaki, he became one of history’s most conflicted figures, a man who understood, more viscerally than perhaps anyone alive, what it meant to hand a technology of mass destruction to the state and then watch it be used. He later opposed the hydrogen bomb. He was stripped of his security clearance. He was destroyed, professionally, by the very government he had served.

The parallel to the current moment is not perfect, no historical parallel ever is, but it is haunting. We are living in a moment when AI systems have become capable enough that their deployment in warfare, surveillance, and autonomous decision-making is no longer a science fiction scenario. It is the current policy landscape. And the question Anthropic is forcing the world to confront is the same question Oppenheimer eventually grappled with: when does the scientist, the engineer, or the technologist have a responsibility to refuse?

Anthropic’s position is that there are two things the technology simply cannot safely and reliably do in its current state: power domestic mass surveillance, and function as fully autonomous lethal weapons. The Pentagon’s position is that those determinations belong to it, to the uniformed military and the elected Commander-in-Chief, not to an unelected CEO in San Francisco. These are not frivolous positions. Both sides have legitimate arguments. But the stakes of getting it wrong are not merely financial or political. They are, potentially, civilizational.

“Unlike many major defense technologies, today’s leading AI systems have been developed primarily in the private sector, by companies like Anthropic, OpenAI, and Google. The increasing capabilities of these systems have forced the Pentagon to bargain with Anthropic over its usage policies or opt for a less proven service.” This is the unprecedented reality we now inhabit. The most powerful military in human history has to negotiate with a five-year-old startup about the terms under which it can use the technology that may determine the outcome of future wars.

What Happens Next

The immediate practical consequences are significant and complex. The decision is also complicated for AI software firm Palantir, which uses Claude to power its most sensitive work with the military and will likely now need to strike a deal with one of Anthropic’s competitors.

As for replacements: Elon Musk’s xAI recently signed an agreement to let the military use its model, Grok, in classified systems. Sources say it’s unlikely to be a like-for-like replacement for Claude. Google’s Gemini and OpenAI’s ChatGPT are both available in unclassified systems, and the Pentagon is accelerating conversations about bringing them into the classified space.

But here’s the catch. Sam Altman has publicly said OpenAI shares Anthropic’s red lines on autonomous weapons and mass surveillance. “I don’t personally think the Pentagon should be threatening DPA against these companies,” Altman told CNBC.

If the Trump administration’s demand is truly for unrestricted access with no carve-outs for surveillance or autonomous weaponry, it may find that the same fight awaits it with every serious AI company, except, notably, Elon Musk’s xAI, which has already agreed to the Pentagon’s terms.

Whether Anthropic will challenge the supply-chain risk designation in court remains an open question; the company has not yet said whether it will do so.

Legal scholars are already debating whether a private company’s terms of service can be superseded by a presidential directive, and whether the Defense Production Act can legally be invoked to compel an AI company to remove safety guardrails from its products.

The government ban comes at a time when Anthropic is under heightened scrutiny, since the company is valued at $380 billion and is planning to go public this year. While the Pentagon contract worth as much as $200 million is a relatively small portion of Anthropic’s $14 billion in revenue, it’s unclear how the friction with the administration will sit with investors or affect other deals the company has to license its AI model to non-government partners. Anthropic CEO Dario Amodei has pointed out that the company’s valuation and revenue have only grown since it took a stand against Trump officials over how AI can be deployed on the battlefield.

The Deeper Question

Strip away the politics, the all-caps posts, and the name-calling, and what you are left with is a question that no society has ever had to answer before in quite this form: who governs artificial intelligence when it becomes a weapon?

In every previous generation of military technology, from gunpowder to dynamite, from tanks to nuclear bombs, the technology was developed by or for the state, and the state retained total control. The invention of the atomic bomb did not come with a license agreement from a private company saying “you may not use this for mass civilian casualties.” The bomb was the government’s bomb.

AI is different. The most capable AI systems in the world were built by private companies, with private capital, under private governance structures, and with their own terms of service. Those companies have, for competitive and ethical reasons, embedded safeguards into their products. And now the government wants those safeguards removed. Not because the government is necessarily planning to use AI for mass surveillance of Americans or fully autonomous killing (the Pentagon insists it is not), but because the government does not want a private company to have the power to tell it what it can and cannot do.

This is genuinely new territory. And the outcome of this fight, however it ultimately resolves, in the courts, in Congress, or in the market, will set precedents that shape the relationship between AI companies, democratic governments, and the machinery of war for decades to come.

America has arrived at its Oppenheimer moment. The technology has outrun the laws, the norms, and perhaps even the wisdom of the people wielding it. The question now is whether anyone, in the White House, in Silicon Valley, or in the halls of Congress, has the vision and the courage to get it right.

*This article reflects reporting as of February 28, 2026. Developments in this story are continuing.*